Prepares IRT analysis for Conquest, TAM, or mirt

Facilitates data analysis using the software Conquest, TAM, or mirt. It automatically checks data for IRT consistency and generates Conquest syntax, label, anchor and data files or the corresponding TAM/mirt call for a single model specified by several arguments in R. Finally, an R object is created which contains the required input for Conquest or TAM. To start the estimation, call runModel with the object returned by defineModel.

Usage

defineModel (dat, items, id, splittedModels = NULL,

irtmodel = c("1PL", "2PL", "PCM", "PCM2", "RSM", "GPCM", "GPCM.groups", "2PL.groups", "GPCM.design", "3PL"),

qMatrix=NULL, DIF.var=NULL, HG.var=NULL, group.var=NULL, weight.var=NULL, anchor = NULL,

domainCol=NULL, itemCol=NULL, valueCol=NULL, catCol = NULL, check.for.linking = TRUE, minNperItem = 50, removeMinNperItem = FALSE,

boundary = 6, remove.boundary = FALSE, remove.no.answers = TRUE, remove.no.answersHG = TRUE,

remove.missing.items = TRUE, remove.constant.items = TRUE, remove.failures = FALSE,

remove.vars.DIF.missing = TRUE, remove.vars.DIF.constant = TRUE,

verbose=TRUE, software = c("conquest","tam", "mirt"), dir = NULL, analysis.name,

schooltype.var = NULL, model.statement = "item", compute.fit = TRUE,

pvMethod = c("regular", "bayesian"), fitTamMmlForBayesian = TRUE,

n.plausible=5, seed = NULL, conquest.folder= NULL,

constraints=c("cases","none","items"), std.err=c("quick","full","none"), distribution=c("normal","discrete"),

method=c("gauss", "quadrature", "montecarlo", "quasiMontecarlo"), n.iterations=2000,

nodes=NULL, p.nodes=2000, f.nodes=2000,converge=0.001,deviancechange=0.0001,

equivalence.table=c("wle","mle","NULL"), use.letters=FALSE,

allowAllScoresEverywhere = TRUE, guessMat = NULL, est.slopegroups = NULL,

fixSlopeMat = NULL, slopeMatDomainCol=NULL, slopeMatItemCol=NULL, slopeMatValueCol=NULL,

progress = NULL, Msteps = NULL, increment.factor=1 , fac.oldxsi=0,

export = list(logfile = TRUE, systemfile = FALSE, history = TRUE,

covariance = TRUE, reg_coefficients = TRUE, designmatrix = FALSE))

Arguments

- dat

A data frame containing all variables necessary for analysis.

- items

Names or column numbers of variables with item responses. Item response values must be numeric (e.g., 0, 1, 2, 3, ...). Character values (e.g., A, B, C, ... or a, b, c, ...) are not allowed. Item columns are expected to be of class numeric or integer. Columns of class character will be transformed.

- id

Name or column number of the identifier (ID) variable.

- splittedModels

Optional: Object returned by splitModels. Definition for multiple model handling.

- irtmodel

If software = "conquest", the argument is ignored, as the IRT model is specified with the model.statement argument. If software = "tam", the argument irtmodel corresponds to the irtmodel argument of tam.mml. See the help page of tam.mml for further details. If software = "mirt", irtmodel is a data.frame with two columns. First column: item identifier. Second column: type of response model for this item. See the itemtype argument in the help file of mirt for further details. Additionally, see example 10 below for specifying a mixed model with Rasch, 2PL, partial credit, and generalized partial credit items.

- qMatrix

Optional: A named data frame indicating how items should be grouped to dimensions. The first column contains the unique names of all items and should be named “item”. The other columns contain dimension definitions and should be named with the respective dimension names. A positive value (e.g., 1 or 2 or 1.4) indicates the loading weight with which an item loads on the dimension; a value of 0 indicates that the respective item does not load on this dimension. If no Q matrix is specified by the user, a unidimensional structure is assumed.

- DIF.var

Name or column number of one grouping variable for which differential item functioning analysis is to be done.

- HG.var

Optional: Names or column numbers of one or more context variables (e.g., sex, school). These variables will be used for the latent regression model in Conquest or TAM.

- group.var

Applies only if software = "tam". Optional: Names or column numbers of one or more grouping variables. Descriptive statistics for WLEs and Plausible Values will be computed separately for each group in Conquest.

- weight.var

Optional: Name or column number of one weighting variable.

- anchor

Optional: A named data frame with anchor parameters. If the data frame has only two columns, the columns do not need to be named. In this case, the item name should be in the first column and the anchor parameters in the second column. Anchor items can be a subset of the items in the dataset and vice versa. If the data frame contains more than two columns, the columns must be identified explicitly using the arguments domainCol, itemCol, valueCol, and catCol.

- domainCol

Optional: Only necessary if the anchor argument was used to define anchor parameters. Moreover, specifying domainCol is only necessary if the item identifiers in anchor are not unique, for example, if a specific item occurs with two parameters, one domain-specific item parameter and one additional “global” item parameter. The domain column then must specify which parameter belongs to which domain.

- itemCol

Optional: Only necessary if the anchor argument was used to define anchor parameters. Moreover, specifying itemCol is only necessary if the anchor data frame has more than two columns. itemCol then must specify which column contains the item identifier.

- valueCol

Optional: Only necessary if the anchor argument was used to define anchor parameters. Moreover, specifying valueCol is only necessary if the anchor data frame has more than two columns. valueCol then must specify which column contains the item parameter values.

- catCol

Optional: Only necessary if the

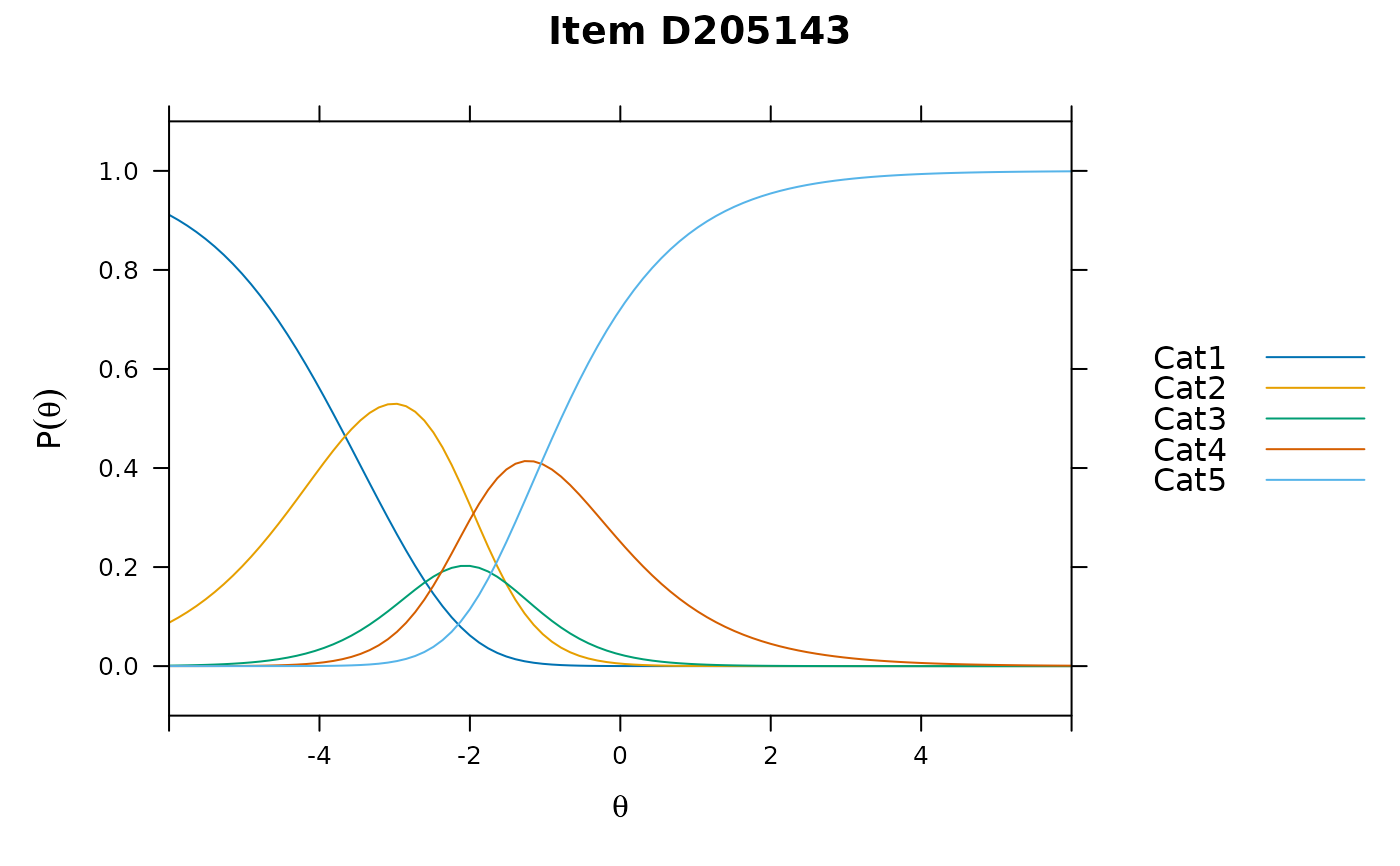

anchorargument was used to define anchor parameters in a partial credit model. ThecatColcolumn then must specify which column contains the item parameter category indices. The values in this column must be named according to the convention"Cat1","Cat2","Cat3", and so on.- check.for.linking

A logical value indicating whether the items in the dataset are checked for being connected with each other via the design.

- minNperItem

Numerical: A message is printed on the console if an item has fewer valid values than the number defined in minNperItem.

- removeMinNperItem

Logical: Remove items with fewer valid responses than defined in minNperItem?

- boundary

Numerical: A message is printed on the console if a subject has answered fewer items than the number defined in boundary.

- remove.boundary

Logical: Remove subjects who have answered fewer items than defined in the boundary argument?

- remove.no.answers

Logical: Should persons without any item responses be removed prior to analysis?

- remove.no.answersHG

Logical: Should persons without any responses on any background variable be removed prior to analysis?

- remove.missing.items

Logical: Should items without any item responses be removed prior to analysis?

- remove.constant.items

Logical: Should items without variance be removed prior to analysis?

- remove.failures

Logical: Should persons without any correct item response (i.e., only “0” responses) be removed prior to analysis?

- remove.vars.DIF.missing

Logical: Applies only in DIF analyses. Should items without any responses in at least one DIF group be removed prior to analysis? Note: Conquest may crash if these items remain in the data.

- remove.vars.DIF.constant

Logical: Applies only in DIF analyses. Should items without variance in at least one DIF group be removed prior to analysis? Note: Conquest may crash if these items remain in the data.

- verbose

A logical value indicating whether messages are printed on the R console.

- software

The desired estimation software for the analysis.

- dir

The directory to which the output will be written. If software = "conquest", dir must be specified. If software = "tam", dir is not mandatory.

- analysis.name

A character string specifying the analysis name. If software = "conquest", analysis.name must be specified. All Conquest input and output files will be named analysis.name with their corresponding extensions. If software = "tam", analysis.name is not mandatory. In the case of multiple model estimation, splitModels automatically defines analysis.name for each model.

- schooltype.var

Optional: Name or column number of the variable indicating the school type (e.g. academic track, non-academic track). Only necessary if p values should be computed for each school type separately.

- model.statement

Optional: Applies only if software = "conquest". A character string giving the model statement in Conquest syntax. If omitted, the statement is generated automatically with respect to the defined model.

- compute.fit

Applies only if software = "conquest". Compute item fit statistics?

- pvMethod

Applies only if software = "tam". Specifies whether PVs should be drawn regularly or using a Bayesian algorithm.

- fitTamMmlForBayesian

Logical, applies only if software = "tam": If PVs are drawn using a Bayesian algorithm, it is not necessary to fit the model via tam.mml beforehand. fitTamMmlForBayesian specifies whether the model should nevertheless be fitted first. See the help page of tam.pv.mcmc for further details.

- n.plausible

The number of plausible values which are to be drawn from the conditioning model.

- seed

Optional: Seed value for the analysis. The value will be used in the Conquest syntax file ('set seed' statement, see Conquest manual, p. 225) or in TAM (control$seed). Note that the seed only takes effect for stochastic integration.

- conquest.folder

Applies only if software = "conquest". A character string with path and name of the Conquest console, for example "c:/programme/conquest/console_Feb2007.exe". In package version 0.7.24 and later, the Conquest executable file is included in the package, so the user need not specify this argument.

- constraints

A character string specifying how the scale should be constrained. Possible options are "cases" (default), "items" and "none". When anchor parameters are specified in anchor, constraints will be set to "none" automatically. In TAM, the option "none" is not allowed. (See the help file of tam.mml for further details.)

- std.err

Applies only if software = "conquest". A character string specifying which type of standard error should be estimated. Possible options are "full", "quick" (default) and "none". See Conquest manual pp. 167 for details on standard error estimation.

- distribution

Applies only if software = "conquest". A character string indicating the a priori trait distribution. Possible options are "normal" (default) and "discrete". See Conquest manual pp. 167 for details on population distributions.

- method

A character string indicating which method should be used for numerical or stochastic integration. Possible options are "gauss" (Gauss-Hermite quadrature: default), "quadrature" (Bock/Aitken quadrature) and "montecarlo". See Conquest manual pp. 167 for details on these methods. When using software = "tam", "gauss" and "quadrature" essentially lead to numerical integration, i.e., TAM is called with control$snodes = 0 and with control$nodes = seq(-6,6,len=nn), where nn equals the number of nodes specified in the nodes argument of defineModel (see below). Whether control$QMC is set to TRUE or FALSE depends on whether "montecarlo" or "quasiMontecarlo" is chosen. "montecarlo" leads to calling TAM with control$QMC = FALSE and snodes = nn; "quasiMontecarlo" leads to calling TAM with control$QMC = TRUE and snodes = nn. In both cases, nn equals the number of nodes specified in the nodes argument of defineModel. To meet the software = "tam" default (Quasi Monte Carlo integration with control$QMC = TRUE), use software="tam", nodes = 21, method = "quasiMontecarlo".

- n.iterations

An integer value specifying the maximum number of iterations for which estimation will proceed without improvement in the deviance.

- nodes

An integer value specifying the number of nodes to be used in the analysis. The default value is 20. When using software = "tam", the value specified here leads to calling TAM with nodes = 20 AND snodes = 0 if "gauss" or "quadrature" or "quasiMontecarlo" was used in the method argument. If "montecarlo" was used in the method argument, the value specified here leads to calling TAM with control$snodes = 20. For numerical integration, for example, method = "gauss" and nodes = 21 (TAM default) may be appropriate. For quasi Monte Carlo integration, method = "quasiMontecarlo" and nodes = 1000 may be appropriate (the TAM authors recommend using at least 1000 nodes).

- p.nodes

Applies only if software = "conquest". An integer value specifying the number of nodes that are used in the approximation of the posterior distributions, which are used in the drawing of plausible values and in the calculation of EAP estimates. The default value is 2000.

- f.nodes

Applies only if software = "conquest". An integer value specifying the number of nodes that are used in the approximation of the posterior distributions in the calculation of fit statistics. The default value is 2000.

- converge

A numeric value specifying the convergence criterion for parameter estimates. The estimation will terminate when the largest change in any parameter estimate between successive iterations of the EM algorithm is less than converge. The default value is 0.001.

- deviancechange

A numeric value specifying the convergence criterion for the deviance. The estimation will terminate when the change in the deviance between successive iterations of the EM algorithm is less than deviancechange. The default value is 0.0001.

- equivalence.table

Applies only if software = "conquest". A character string specifying the type of equivalence table to print. More precisely, the person estimator to be used to create the table must be specified here. Possible options are "wle" (default), "mle" and NULL. If NULL, no equivalence table is computed at all.

- use.letters

Applies only if software = "conquest". A logical value indicating whether item response values should be coded as letters. This option can be used in partial credit models comprising items with more than 10 categories to avoid response columns with width 2 in Conquest.

- allowAllScoresEverywhere

Applies only if software = "conquest". Defines score statement generation in multidimensional polytomous models. Consider two dimensions, 'reading' and 'listening'. In 'reading', values 0, 1, 2, 3 occur. In 'listening', values 1, 2, 3, 4 occur. If TRUE, values 0, 1, 2, 3, 4 are defined for both dimensions. Otherwise, values 0, 1, 2, 3 are defined for 'reading' and values 1, 2, 3, 4 are defined for 'listening'.

- guessMat

Applies only if software = "tam" for 3PL models. A named data frame with two columns indicating for which items a common guessing parameter should be estimated. The first column contains the names of all items in the analysis and should be named "item". The second column is numerical (integer values recommended) and allocates the items to groups. For each group of items, a separate guessing parameter is estimated. If the value in the second column equals zero, the guessing parameter is fixed to zero.

- est.slopegroups

Applies only if software = "tam" for 2PL models. Optionally, a named data frame with two columns indicating for which items a common discrimination parameter should be estimated. The first column contains the names of all items in the analysis and should be named "item". The second column is numerical (integer values recommended) and allocates the items to groups. For each group of items, a separate discrimination parameter is estimated. If est.slopegroups is not specified, a discrimination parameter is estimated for each item.

- fixSlopeMat

Applies only if software = "tam" for 2PL models. Optionally, a named data frame with two columns indicating for which items a fixed discrimination should be assumed. The first column contains the names of the items whose discrimination should be fixed. Note that item identifiers should be unique; if not, use the further arguments slopeMatDomainCol, slopeMatItemCol and slopeMatValueCol. The second column is numerical and contains the discrimination value. Note: To date, this works only for between-item dimensionality models. Within-item dimensionality models must be specified directly in TAM, using the B.fixed argument of tam.mml. Items whose discrimination should be estimated should not occur in this data frame.

- slopeMatDomainCol

Optional: Only necessary if the fixSlopeMat argument was used to define fixed slope parameters. Moreover, specifying slopeMatDomainCol is only necessary if the item identifiers in fixSlopeMat are not unique, for example, if a specific item occurs with two slope parameters, one domain-specific item slope parameter and one additional “global” item parameter. The domain column then must specify which parameter belongs to which domain.

- slopeMatItemCol

Optional: Only necessary if the fixSlopeMat argument was used to define fixed slope parameters. Moreover, specifying slopeMatItemCol is only necessary if the fixSlopeMat data frame has more than two columns. slopeMatItemCol then must specify which column contains the item identifier.

- slopeMatValueCol

Optional: Only necessary if the fixSlopeMat argument was used to define slope parameters. Moreover, specifying slopeMatValueCol is only necessary if the fixSlopeMat data frame has more than two columns. slopeMatValueCol then must specify which column contains the item parameter values.

- progress

Logical: Applies only if software = "tam" or software = "mirt". Print estimation progress messages on the console? If NULL (the default), progress is printed for software = "mirt", but not for software = "tam".

- Msteps

Number of M steps for item parameter estimation. A high value of M steps could be helpful in cases of non-convergence. The default value is 4; the default for 3pl models is set to 10.

- increment.factor

Applies only if software = "tam". Should only be varied if the model does not converge. See the help page of tam.mml for further details.

- fac.oldxsi

Applies only if software = "tam". Should only be varied if the model does not converge. See the help page of tam.mml for further details.

- export

Applies only if software = "conquest". Specifies which additional files should be written to the hard disk.
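The data-frame arguments described above (qMatrix, anchor, guessMat) and the documented mapping of method and nodes onto TAM control settings can be sketched in base R as follows. This is an illustration only: the item names, parameter values and the column names dom and est are made up, and tamIntegrationControl is a hypothetical helper that mirrors the description of the method argument; it is not part of the package and not its actual implementation.

```r
# Q matrix: first column named "item", one further column per dimension.
# 1 = item loads on the dimension, 0 = it does not (item names are invented).
qMat <- data.frame(
  item      = c("T01_01", "T01_02", "T02_01", "T02_02"),
  reading   = c(1, 1, 0, 0),
  listening = c(0, 0, 1, 1),
  stringsAsFactors = FALSE
)

# Anchor parameters with more than two columns: the columns then have to be
# identified via domainCol, itemCol and valueCol (values are invented).
anchorPar <- data.frame(
  dom  = c("reading", "reading", "listening"),
  item = c("T01_01", "T01_02", "T02_01"),
  est  = c(-0.52, 0.31, 1.07),
  stringsAsFactors = FALSE
)

# guessMat for 3PL models in TAM: items with the same group number share a
# guessing parameter; group 0 fixes the guessing parameter to zero.
guessMat <- data.frame(
  item          = qMat$item,
  guessingGroup = c(1, 1, 2, 0),
  stringsAsFactors = FALSE
)

# Hypothetical helper (assumption, not package code): translate 'method' and
# 'nodes' into TAM control settings as described for the 'method' argument.
tamIntegrationControl <- function(method = c("gauss", "quadrature",
                                             "montecarlo", "quasiMontecarlo"),
                                  nodes = 20) {
  method <- match.arg(method)
  if (method %in% c("gauss", "quadrature")) {
    # numerical integration: fixed grid of nodes, no stochastic nodes
    list(snodes = 0, nodes = seq(-6, 6, length.out = nodes))
  } else {
    # stochastic integration: QMC only for "quasiMontecarlo"
    list(snodes = nodes, QMC = (method == "quasiMontecarlo"))
  }
}

ctrl <- tamIntegrationControl("gauss", nodes = 21)
```

Under these assumptions, the objects would be handed over as qMatrix = qMat, guessMat = guessMat, and anchor = anchorPar together with domainCol = "dom", itemCol = "item" and valueCol = "est".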

Value

A list which contains information about the desired estimation. The list is intended for further processing via runModel. The structure of the list varies depending on whether multiple models were called using splitModels or not. If splitModels was called, the number of elements in the list equals the number of models defined via splitModels. Each element in the list is a list with various elements:

- software

Character string of the software intended for the further estimation, i.e., "conquest" or "tam".

- qMatrix

The Q matrix allocating items to dimensions.

- all.Names

Named list of all relevant variables of the data set.

- dir

Character string of the directory in which the results are to be saved.

- analysis.name

Character string of the analysis' name.

- deskRes

Data frame with descriptives (e.g., p values) of the test items.

- discrim

Data frame with item discrimination values.

- perNA

The person identifiers of examinees who are excluded from the analysis due to solely missing values.

- per0

The person identifiers of examinees who have solely false responses. If remove.failures was set to TRUE, these persons are excluded from the data set.

- perA

The person identifiers of examinees who have solely correct responses.

- perExHG

The person identifiers of examinees who are excluded from the analysis due to missing values on explicit variables.

- itemsExcluded

Character string of items which were excluded, for example due to zero variance or solely missing values.

If software == "conquest", the output additionally includes the following elements:

- input

Character string of the path with Conquest input (cqc) file.

- conquest.folder

Character string of the path of the conquest executable file.

- model.name

Character string of the model name.

If software == "tam", the output additionally includes the following elements:

- anchor

Optional: data frame of anchor parameters (if anchor parameters were defined).

- daten

The prepared data for TAM analysis.

- irtmodel

Character string of the used IRT model.

- est.slopegroups

Applies for 2pl modeling. Information about which items share a common slope parameter.

- guessMat

Applies for 3pl modeling. Information about which items share a common guessing parameter.

- control

List of control parameters for TAM estimation.

- n.plausible

Desired number of plausible values.

Examples

################################################################################

### Preparation: necessary for all examples ###

################################################################################

# load example data

# data set 'trends' contains item response data for three measurement occasions

# in a single data.frame

data(trends)

# second, create the q matrix from long format data

qMat <- unique(trends[ ,c("item","domain")])

qMat <- data.frame ( qMat[,"item", drop=FALSE], model.matrix(~domain-1, data = qMat))

################################################################################

### Example 1: Unidimensional Rasch Model ###

################################################################################

# Example 1: define and run a unidimensional Rasch model for the 2010 cohort

# with all variables from booklet 02 (this booklet contains reading and listening

# items together). For the estimation, Conquest is used.

# Since the wide-format dataset generated here will be used in later analyses,

# several covariates - such as gender and SES - are generated at the same time.

# These variables are not yet used in this example.

datW <- subset(trends, booklet == "Bo02" & year == 2010) |>

reshape2::dcast(idstud+language+sex+ses+country~item, value.var = "value")

# defining the unidimensional model: specifying q matrix is not necessary

mod1 <- defineModel(dat=datW, items= -c(1:5), id="idstud", analysis.name = "unidim", dir = tempdir())

#> Cannot find conquest 2007 executable file. Please choose manually.

#> Error in file.choose(): file choice cancelled

# run the model

run1 <- runModel(mod1)

#> Error: object 'mod1' not found

# get the results

res1 <- getResults(run1)

#> Error: object 'run1' not found

# extract the item parameters from the results object

item1<- itemFromRes(res1)

#> Error: object 'res1' not found

################################################################################

### Example 1a: Unidimensional Rasch Model with DIF estimation ###

################################################################################

# nearly the same procedure as in example 1. Using 'sex' as DIF variable

# note that 'sex' is a factor variable here. Conquest needs all explicit variables

# to be numeric. Variables will be automatically transformed to numeric by

# 'defineModel'. However, it might be the better idea to transform the variable

# manually.

datW[,"sexNum"] <- car::recode ( datW[,"sex"] , "'male'=0; 'female'=1", as.factor = FALSE)

# as we have defined a new variable ('sexNum') in the data, it is a good idea

# to explicitly specify item columns ... instead of saying 'items= -c(1:5)' which

# means: Everything except column 1 to 5 are item columns

items<- grep("^T[[:digit:]]{2}", colnames(datW))

mod1a<- defineModel(dat=datW, items= items, id="idstud", DIF.var = "sexNum",

analysis.name = "unidimDIF", dir = tempdir())

#> Cannot find conquest 2007 executable file. Please choose manually.

#> Error in file.choose(): file choice cancelled

# run the model

run1a<- runModel(mod1a)

#> Error: object 'mod1a' not found

# get the results

res1a<- getResults(run1a)

#> Error: object 'run1a' not found

################################################################################

### Example 1b: Rasch Model with DIF variable in customized model statement ###

################################################################################

# building on example 1a, the DIF variable should be used without interaction term

# and without eliminating items which do not vary within DIF groups. We need the

# 'sexNum' variable which was defined previously

# Example based on 'model 6' in 'r:\Nawi\main\10_Studien\Auswertung\Alex Robitzsch R-Skripte\skalierung_conquest_mit_R_04.R'

items<- grep("^T[[:digit:]]{2}", colnames(datW))

mod1b<- defineModel(dat=datW, items= items, id="idstud", model.statement = "item + sexNum",

analysis.name = "unidimCustom", dir = tempdir())

#> Cannot find conquest 2007 executable file. Please choose manually.

#> Error in file.choose(): file choice cancelled

# run the model

run1b<- runModel(mod1b)

#> Error: object 'mod1b' not found

# get the results

res1b<- getResults(run1b)

#> Error: object 'run1b' not found

# item parameter

it1b <- itemFromRes(res1b)

#> Error: object 'res1b' not found

################################################################################

### Example 2a: Multidimensional Rasch Model with anchoring ###

################################################################################

# Example 2a: running a multidimensional Rasch model on a subset of items with latent

# regression. Use item parameter from the first model as anchor parameters

# read in anchor parameters from the results object of the first example

aPar <- itemFromRes(res1)[,c("item", "est")]

#> Error: object 'res1' not found

# defining the model: specifying q matrix now is necessary.

# Please note that all latent regression variables have to be of class numeric.

# If regression variables are factors, dummy variables automatically will be used.

# (This behavior is the same as in lm(), for example.)

mod2a<- defineModel(dat=datW, items= grep("^T[[:digit:]]{2}", colnames(datW)),

id="idstud", analysis.name = "twodim", HG.var = c("language","sex", "ses"),

qMatrix = qMat, anchor = aPar, n.plausible = 20,dir = tempdir())

#> Error: object 'aPar' not found

# run the model

run2a<- runModel(mod2a)

#> Error: object 'mod2a' not found

# get the results

res2a<- getResults(run2a)

#> Error: object 'run2a' not found

################################################################################

### Example 2b: Multidimensional Rasch Model with equating ###

################################################################################

# Example 2b: running a multidimensional Rasch model on a subset of items

# without anchoring. Defining the model: specifying q matrix now is necessary.

mod2b<- defineModel(dat=datW, items= grep("^T[[:digit:]]{2}", colnames(datW)),

id="idstud", analysis.name = "twodim2", qMatrix = qMat,

n.plausible = 20, dir = tempdir())

#> Cannot find conquest 2007 executable file. Please choose manually.

#> Error in file.choose(): file choice cancelled

# run the model

run2b <- runModel(mod2b)

#> Error: object 'mod2b' not found

# get the results

res2b<- getResults(run2b)

#> Error: object 'run2b' not found

### equating (if not anchored)

eq2b <- equat1pl( results = res2b, prmNorm = aPar, difBound=.64, iterativ=TRUE)

#> Error: object 'res2b' not found

### transformation to the 'bista' metric: needs reference population definition

### we use some arbitrary values here

ref <- data.frame ( domain = c("domainreading", "domainlistening"),

m = c(0.03890191, 0.03587727), sd= c(1.219, 0.8978912))

cuts <- list ( domainreading = list ( values = 390+0:3*75),

domainlistening = list ( values = 360+0:3*85))

tf2b <- transformToBista ( equatingList = eq2b, refPop = ref, cuts = cuts)

#> Error: object 'eq2b' not found

################################################################################

### Example 3: Multidimensional Rasch Model in TAM ###

################################################################################

# estimate model 2 with latent regression and anchored parameters in TAM

# specification of an output folder (via 'dir' argument) no longer necessary

mod3T<- defineModel(dat=datW, items= grep("^T[[:digit:]]{2}", colnames(datW)),

id="idstud", qMatrix = qMat, HG.var = "sex", anchor = aPar, software = "tam")

#> Error: object 'aPar' not found

# run the model

run3T<- runModel(mod3T)

#> Error: object 'mod3T' not found

# Object 'run3T' is of class 'tam.mml'

class(run3T)

#> Error: object 'run3T' not found

# the class of 'run3T' corresponds to the class defined by the TAM package; all

# functions of the TAM package intended for further processing (e.g. drawing

# plausible values, plotting deviance change etc.) work, for example:

wle <- tam.wle(run3T)

#> Error: object 'run3T' not found

# Finally, the model results are collected in a single data frame

res3T<- getResults(run3T)

#> Error: object 'run3T' not found

# repeat the same model with mirt

items<- grep("^T[[:digit:]]{2}", colnames(datW), value=TRUE)

irtM <- data.frame(item = items, task = substr(items,1,4), stringsAsFactors = FALSE) |> dplyr::mutate(irtmod = "Rasch")

mod3M<- defineModel(dat=datW, items= grep("^T[[:digit:]]{2}", colnames(datW)),id="idstud",

irtmodel = irtM[,c("item", "irtmod")], anchor = aPar, qMatrix = qMat, HG.var = "sex", software = "mirt")

#> Error: object 'aPar' not found

run3M<- runModel(mod3M)

#> Error: object 'mod3M' not found

res3M<- getResults(run3M)

#> Error: object 'run3M' not found

itM <- itemFromRes(res3M)

#> Error: object 'res3M' not found

################################################################################

### Example 4: define and run multiple models defined by 'splitModels' ###

################################################################################

# Example 4: define and run multiple models defined by 'splitModels'

# Model split is possible for different groups of items (i.e. domains) and/or

# different groups of persons (for example, federal states within Germany)

# define person grouping

pers <- data.frame ( idstud = datW[,"idstud"] , group1 = datW[,"sex"],

group2 = datW[,"country"], stringsAsFactors = FALSE )

# define 24 models, splitting according to person groups and item groups separately

# by default, multicore processing is applied

l1 <- splitModels ( qMatrix = qMat, person.groups = pers, nCores = 1)

#> ---------------------------------

#> splitModels: generating 24 models

#> ........................

#> see <returned>$models

#> number of cores: 1

#> ---------------------------------

# In this example, the sample sizes in the individual subgroups or models are far

# too small. The example is therefore intended solely to illustrate how the

# analysis can be broken down by item and respondent group.

table(datW[,c("sex", "country")])

#> country

#> sex countryA countryB countryC

#> female 42 27 20

#> male 32 35 16

# apply 'defineModel' for each of the 24 models in 'l1'

modMul<- defineModel(dat = datW, items = grep("^T[[:digit:]]{2}", colnames(datW)),

id = "idstud", check.for.linking = TRUE, splittedModels = l1, software = "tam")

#>

#> Specification of 'qMatrix' and 'person.groups' results in 24 model(s).

#> Warning: Model split preparation for model No. 1, model name

#> domainlistening__group1.female_group2.countryC: 118 items from 134 items listed

#> the Q matrix not found in data:

#> ℹ 'T12_11', 'T13_15', 'T15_03', 'T13_04', 'T13_07', 'T13_16', 'T13_01',

#> 'T15_02', 'T15_01', 'T15_15', 'T15_16', 'T15_09', 'T13_12', 'T13_13',

#> 'T15_14', 'T13_08', 'T13_06', 'T15_12', 'T13_10', 'T13_17', 'T15_11',

#> 'T13_05', 'T13_09', 'T15_10', 'T15_13', 'T15_17', 'T15_18', 'T13_18',

#> 'T16_08', 'T16_09', 'T16_11', 'T16_13', 'T16_01', 'T16_04', 'T16_10',

#> 'T16_02', 'T16_12', 'T16_06', 'T16_07', 'T19_06', 'T19_08', 'T19_05',

#> 'T19_11', 'T19_01', 'T19_02', 'T19_30', 'T19_03', 'T19_07', 'T19_04',

#> 'T19_09', 'T21_11', 'T18_01', 'T21_01', 'T18_05', 'T21_12', 'T18_13',

#> 'T21_10', 'T18_11', 'T21_05', 'T21_13', 'T18_08', 'T18_07', 'T21_09',

#> 'T18_04', 'T18_02', 'T21_02', 'T21_06', 'T18_09', 'T21_04', 'T18_12',

#> 'T21_14', 'T21_03', 'T18_10', 'T30_06', 'T29_04', 'T29_02', 'T30_02',

#> 'T30_03', 'T30_01', 'T29_05', 'T29_03', 'T28_08', 'T28_09', 'T28_02',

#> 'T28_03', 'T28_04', 'T28_01', 'T28_10', 'T28_06', 'T20_05', 'T20_09',

#> 'T20_30', 'T20_08', 'T20_12', 'T20_06', 'T20_07', 'T20_02', 'T20_13',

#> 'T20_03', 'T20_01', 'T20_04', 'T20_11', 'T20_10', 'T32_04', 'T31_03',

#> 'T31_01', 'T31_04', 'T32_03', 'T32_02', 'T32_01', 'T17_02', 'T17_09',

#> 'T17_10', 'T17_04', 'T17_03', 'T17_08', 'T17_06', 'T17_01'

#> Warning: Model split preparation for model No. 2, model name

#> domainlistening__group1.female_group2.countryA: 118 items from 134 items listed

#> the Q matrix not found in data:

#> ℹ 'T12_11', 'T13_15', 'T15_03', 'T13_04', 'T13_07', 'T13_16', 'T13_01',

#> 'T15_02', 'T15_01', 'T15_15', 'T15_16', 'T15_09', 'T13_12', 'T13_13',

#> 'T15_14', 'T13_08', 'T13_06', 'T15_12', 'T13_10', 'T13_17', 'T15_11',

#> 'T13_05', 'T13_09', 'T15_10', 'T15_13', 'T15_17', 'T15_18', 'T13_18',

#> 'T16_08', 'T16_09', 'T16_11', 'T16_13', 'T16_01', 'T16_04', 'T16_10',

#> 'T16_02', 'T16_12', 'T16_06', 'T16_07', 'T19_06', 'T19_08', 'T19_05',

#> 'T19_11', 'T19_01', 'T19_02', 'T19_30', 'T19_03', 'T19_07', 'T19_04',

#> 'T19_09', 'T21_11', 'T18_01', 'T21_01', 'T18_05', 'T21_12', 'T18_13',

#> 'T21_10', 'T18_11', 'T21_05', 'T21_13', 'T18_08', 'T18_07', 'T21_09',

#> 'T18_04', 'T18_02', 'T21_02', 'T21_06', 'T18_09', 'T21_04', 'T18_12',

#> 'T21_14', 'T21_03', 'T18_10', 'T30_06', 'T29_04', 'T29_02', 'T30_02',

#> 'T30_03', 'T30_01', 'T29_05', 'T29_03', 'T28_08', 'T28_09', 'T28_02',

#> 'T28_03', 'T28_04', 'T28_01', 'T28_10', 'T28_06', 'T20_05', 'T20_09',

#> 'T20_30', 'T20_08', 'T20_12', 'T20_06', 'T20_07', 'T20_02', 'T20_13',

#> 'T20_03', 'T20_01', 'T20_04', 'T20_11', 'T20_10', 'T32_04', 'T31_03',

#> 'T31_01', 'T31_04', 'T32_03', 'T32_02', 'T32_01', 'T17_02', 'T17_09',

#> 'T17_10', 'T17_04', 'T17_03', 'T17_08', 'T17_06', 'T17_01'

#> Warning: Model split preparation for model No. 3, model name

#> domainlistening__group1.female_group2.countryB: 118 items from 134 items listed

#> the Q matrix not found in data:

#> ℹ 'T12_11', 'T13_15', 'T15_03', 'T13_04', 'T13_07', 'T13_16', 'T13_01',

#> 'T15_02', 'T15_01', 'T15_15', 'T15_16', 'T15_09', 'T13_12', 'T13_13',

#> 'T15_14', 'T13_08', 'T13_06', 'T15_12', 'T13_10', 'T13_17', 'T15_11',

#> 'T13_05', 'T13_09', 'T15_10', 'T15_13', 'T15_17', 'T15_18', 'T13_18',

#> 'T16_08', 'T16_09', 'T16_11', 'T16_13', 'T16_01', 'T16_04', 'T16_10',

#> 'T16_02', 'T16_12', 'T16_06', 'T16_07', 'T19_06', 'T19_08', 'T19_05',

#> 'T19_11', 'T19_01', 'T19_02', 'T19_30', 'T19_03', 'T19_07', 'T19_04',

#> 'T19_09', 'T21_11', 'T18_01', 'T21_01', 'T18_05', 'T21_12', 'T18_13',

#> 'T21_10', 'T18_11', 'T21_05', 'T21_13', 'T18_08', 'T18_07', 'T21_09',

#> 'T18_04', 'T18_02', 'T21_02', 'T21_06', 'T18_09', 'T21_04', 'T18_12',

#> 'T21_14', 'T21_03', 'T18_10', 'T30_06', 'T29_04', 'T29_02', 'T30_02',

#> 'T30_03', 'T30_01', 'T29_05', 'T29_03', 'T28_08', 'T28_09', 'T28_02',

#> 'T28_03', 'T28_04', 'T28_01', 'T28_10', 'T28_06', 'T20_05', 'T20_09',

#> 'T20_30', 'T20_08', 'T20_12', 'T20_06', 'T20_07', 'T20_02', 'T20_13',

#> 'T20_03', 'T20_01', 'T20_04', 'T20_11', 'T20_10', 'T32_04', 'T31_03',

#> 'T31_01', 'T31_04', 'T32_03', 'T32_02', 'T32_01', 'T17_02', 'T17_09',

#> 'T17_10', 'T17_04', 'T17_03', 'T17_08', 'T17_06', 'T17_01'

#> Warning: Model split preparation for model No. 4, model name

#> domainlistening__group1.female_group2.all: 118 items from 134 items listed the

#> Q matrix not found in data:

#> ℹ 'T12_11', 'T13_15', 'T15_03', 'T13_04', 'T13_07', 'T13_16', 'T13_01',

#> 'T15_02', 'T15_01', 'T15_15', 'T15_16', 'T15_09', 'T13_12', 'T13_13',

#> 'T15_14', 'T13_08', 'T13_06', 'T15_12', 'T13_10', 'T13_17', 'T15_11',

#> 'T13_05', 'T13_09', 'T15_10', 'T15_13', 'T15_17', 'T15_18', 'T13_18',

#> 'T16_08', 'T16_09', 'T16_11', 'T16_13', 'T16_01', 'T16_04', 'T16_10',

#> 'T16_02', 'T16_12', 'T16_06', 'T16_07', 'T19_06', 'T19_08', 'T19_05',

#> 'T19_11', 'T19_01', 'T19_02', 'T19_30', 'T19_03', 'T19_07', 'T19_04',

#> 'T19_09', 'T21_11', 'T18_01', 'T21_01', 'T18_05', 'T21_12', 'T18_13',

#> 'T21_10', 'T18_11', 'T21_05', 'T21_13', 'T18_08', 'T18_07', 'T21_09',

#> 'T18_04', 'T18_02', 'T21_02', 'T21_06', 'T18_09', 'T21_04', 'T18_12',

#> 'T21_14', 'T21_03', 'T18_10', 'T30_06', 'T29_04', 'T29_02', 'T30_02',

#> 'T30_03', 'T30_01', 'T29_05', 'T29_03', 'T28_08', 'T28_09', 'T28_02',

#> 'T28_03', 'T28_04', 'T28_01', 'T28_10', 'T28_06', 'T20_05', 'T20_09',

#> 'T20_30', 'T20_08', 'T20_12', 'T20_06', 'T20_07', 'T20_02', 'T20_13',

#> 'T20_03', 'T20_01', 'T20_04', 'T20_11', 'T20_10', 'T32_04', 'T31_03',

#> 'T31_01', 'T31_04', 'T32_03', 'T32_02', 'T32_01', 'T17_02', 'T17_09',

#> 'T17_10', 'T17_04', 'T17_03', 'T17_08', 'T17_06', 'T17_01'

#> Warning: Model split preparation for model No. 5, model name

#> domainlistening__group1.male_group2.countryC: 118 items from 134 items listed

#> the Q matrix not found in data:

#> ℹ 'T12_11', 'T13_15', 'T15_03', 'T13_04', 'T13_07', 'T13_16', 'T13_01',

#> 'T15_02', 'T15_01', 'T15_15', 'T15_16', 'T15_09', 'T13_12', 'T13_13',

#> 'T15_14', 'T13_08', 'T13_06', 'T15_12', 'T13_10', 'T13_17', 'T15_11',

#> 'T13_05', 'T13_09', 'T15_10', 'T15_13', 'T15_17', 'T15_18', 'T13_18',

#> 'T16_08', 'T16_09', 'T16_11', 'T16_13', 'T16_01', 'T16_04', 'T16_10',

#> 'T16_02', 'T16_12', 'T16_06', 'T16_07', 'T19_06', 'T19_08', 'T19_05',

#> 'T19_11', 'T19_01', 'T19_02', 'T19_30', 'T19_03', 'T19_07', 'T19_04',

#> 'T19_09', 'T21_11', 'T18_01', 'T21_01', 'T18_05', 'T21_12', 'T18_13',

#> 'T21_10', 'T18_11', 'T21_05', 'T21_13', 'T18_08', 'T18_07', 'T21_09',

#> 'T18_04', 'T18_02', 'T21_02', 'T21_06', 'T18_09', 'T21_04', 'T18_12',

#> 'T21_14', 'T21_03', 'T18_10', 'T30_06', 'T29_04', 'T29_02', 'T30_02',

#> 'T30_03', 'T30_01', 'T29_05', 'T29_03', 'T28_08', 'T28_09', 'T28_02',

#> 'T28_03', 'T28_04', 'T28_01', 'T28_10', 'T28_06', 'T20_05', 'T20_09',

#> 'T20_30', 'T20_08', 'T20_12', 'T20_06', 'T20_07', 'T20_02', 'T20_13',

#> 'T20_03', 'T20_01', 'T20_04', 'T20_11', 'T20_10', 'T32_04', 'T31_03',

#> 'T31_01', 'T31_04', 'T32_03', 'T32_02', 'T32_01', 'T17_02', 'T17_09',

#> 'T17_10', 'T17_04', 'T17_03', 'T17_08', 'T17_06', 'T17_01'

#> Warning: Model split preparation for model No. 6, model name

#> domainlistening__group1.male_group2.countryA: 118 items from 134 items listed

#> the Q matrix not found in data:

#> ℹ 'T12_11', 'T13_15', 'T15_03', 'T13_04', 'T13_07', 'T13_16', 'T13_01',

#> 'T15_02', 'T15_01', 'T15_15', 'T15_16', 'T15_09', 'T13_12', 'T13_13',

#> 'T15_14', 'T13_08', 'T13_06', 'T15_12', 'T13_10', 'T13_17', 'T15_11',

#> 'T13_05', 'T13_09', 'T15_10', 'T15_13', 'T15_17', 'T15_18', 'T13_18',

#> 'T16_08', 'T16_09', 'T16_11', 'T16_13', 'T16_01', 'T16_04', 'T16_10',

#> 'T16_02', 'T16_12', 'T16_06', 'T16_07', 'T19_06', 'T19_08', 'T19_05',

#> 'T19_11', 'T19_01', 'T19_02', 'T19_30', 'T19_03', 'T19_07', 'T19_04',

#> 'T19_09', 'T21_11', 'T18_01', 'T21_01', 'T18_05', 'T21_12', 'T18_13',

#> 'T21_10', 'T18_11', 'T21_05', 'T21_13', 'T18_08', 'T18_07', 'T21_09',

#> 'T18_04', 'T18_02', 'T21_02', 'T21_06', 'T18_09', 'T21_04', 'T18_12',

#> 'T21_14', 'T21_03', 'T18_10', 'T30_06', 'T29_04', 'T29_02', 'T30_02',

#> 'T30_03', 'T30_01', 'T29_05', 'T29_03', 'T28_08', 'T28_09', 'T28_02',

#> 'T28_03', 'T28_04', 'T28_01', 'T28_10', 'T28_06', 'T20_05', 'T20_09',

#> 'T20_30', 'T20_08', 'T20_12', 'T20_06', 'T20_07', 'T20_02', 'T20_13',

#> 'T20_03', 'T20_01', 'T20_04', 'T20_11', 'T20_10', 'T32_04', 'T31_03',

#> 'T31_01', 'T31_04', 'T32_03', 'T32_02', 'T32_01', 'T17_02', 'T17_09',

#> 'T17_10', 'T17_04', 'T17_03', 'T17_08', 'T17_06', 'T17_01'

#> Warning: Model split preparation for model No. 7, model name

#> domainlistening__group1.male_group2.countryB: 118 items from 134 items listed

#> the Q matrix not found in data:

#> ℹ 'T12_11', 'T13_15', 'T15_03', 'T13_04', 'T13_07', 'T13_16', 'T13_01',

#> 'T15_02', 'T15_01', 'T15_15', 'T15_16', 'T15_09', 'T13_12', 'T13_13',

#> 'T15_14', 'T13_08', 'T13_06', 'T15_12', 'T13_10', 'T13_17', 'T15_11',

#> 'T13_05', 'T13_09', 'T15_10', 'T15_13', 'T15_17', 'T15_18', 'T13_18',

#> 'T16_08', 'T16_09', 'T16_11', 'T16_13', 'T16_01', 'T16_04', 'T16_10',

#> 'T16_02', 'T16_12', 'T16_06', 'T16_07', 'T19_06', 'T19_08', 'T19_05',

#> 'T19_11', 'T19_01', 'T19_02', 'T19_30', 'T19_03', 'T19_07', 'T19_04',

#> 'T19_09', 'T21_11', 'T18_01', 'T21_01', 'T18_05', 'T21_12', 'T18_13',

#> 'T21_10', 'T18_11', 'T21_05', 'T21_13', 'T18_08', 'T18_07', 'T21_09',

#> 'T18_04', 'T18_02', 'T21_02', 'T21_06', 'T18_09', 'T21_04', 'T18_12',

#> 'T21_14', 'T21_03', 'T18_10', 'T30_06', 'T29_04', 'T29_02', 'T30_02',

#> 'T30_03', 'T30_01', 'T29_05', 'T29_03', 'T28_08', 'T28_09', 'T28_02',

#> 'T28_03', 'T28_04', 'T28_01', 'T28_10', 'T28_06', 'T20_05', 'T20_09',

#> 'T20_30', 'T20_08', 'T20_12', 'T20_06', 'T20_07', 'T20_02', 'T20_13',

#> 'T20_03', 'T20_01', 'T20_04', 'T20_11', 'T20_10', 'T32_04', 'T31_03',

#> 'T31_01', 'T31_04', 'T32_03', 'T32_02', 'T32_01', 'T17_02', 'T17_09',

#> 'T17_10', 'T17_04', 'T17_03', 'T17_08', 'T17_06', 'T17_01'

#> Warning: Model split preparation for model No. 8, model name

#> domainlistening__group1.male_group2.all: 118 items from 134 items listed the Q

#> matrix not found in data:

#> ℹ 'T12_11', 'T13_15', 'T15_03', 'T13_04', 'T13_07', 'T13_16', 'T13_01',

#> 'T15_02', 'T15_01', 'T15_15', 'T15_16', 'T15_09', 'T13_12', 'T13_13',

#> 'T15_14', 'T13_08', 'T13_06', 'T15_12', 'T13_10', 'T13_17', 'T15_11',

#> 'T13_05', 'T13_09', 'T15_10', 'T15_13', 'T15_17', 'T15_18', 'T13_18',

#> 'T16_08', 'T16_09', 'T16_11', 'T16_13', 'T16_01', 'T16_04', 'T16_10',

#> 'T16_02', 'T16_12', 'T16_06', 'T16_07', 'T19_06', 'T19_08', 'T19_05',

#> 'T19_11', 'T19_01', 'T19_02', 'T19_30', 'T19_03', 'T19_07', 'T19_04',

#> 'T19_09', 'T21_11', 'T18_01', 'T21_01', 'T18_05', 'T21_12', 'T18_13',

#> 'T21_10', 'T18_11', 'T21_05', 'T21_13', 'T18_08', 'T18_07', 'T21_09',

#> 'T18_04', 'T18_02', 'T21_02', 'T21_06', 'T18_09', 'T21_04', 'T18_12',

#> 'T21_14', 'T21_03', 'T18_10', 'T30_06', 'T29_04', 'T29_02', 'T30_02',

#> 'T30_03', 'T30_01', 'T29_05', 'T29_03', 'T28_08', 'T28_09', 'T28_02',

#> 'T28_03', 'T28_04', 'T28_01', 'T28_10', 'T28_06', 'T20_05', 'T20_09',

#> 'T20_30', 'T20_08', 'T20_12', 'T20_06', 'T20_07', 'T20_02', 'T20_13',

#> 'T20_03', 'T20_01', 'T20_04', 'T20_11', 'T20_10', 'T32_04', 'T31_03',

#> 'T31_01', 'T31_04', 'T32_03', 'T32_02', 'T32_01', 'T17_02', 'T17_09',

#> 'T17_10', 'T17_04', 'T17_03', 'T17_08', 'T17_06', 'T17_01'

#> Warning: Model split preparation for model No. 9, model name

#> domainlistening__group1.all_group2.countryC: 118 items from 134 items listed

#> the Q matrix not found in data:

#> ℹ 'T12_11', 'T13_15', 'T15_03', 'T13_04', 'T13_07', 'T13_16', 'T13_01',

#> 'T15_02', 'T15_01', 'T15_15', 'T15_16', 'T15_09', 'T13_12', 'T13_13',

#> 'T15_14', 'T13_08', 'T13_06', 'T15_12', 'T13_10', 'T13_17', 'T15_11',

#> 'T13_05', 'T13_09', 'T15_10', 'T15_13', 'T15_17', 'T15_18', 'T13_18',

#> 'T16_08', 'T16_09', 'T16_11', 'T16_13', 'T16_01', 'T16_04', 'T16_10',

#> 'T16_02', 'T16_12', 'T16_06', 'T16_07', 'T19_06', 'T19_08', 'T19_05',

#> 'T19_11', 'T19_01', 'T19_02', 'T19_30', 'T19_03', 'T19_07', 'T19_04',

#> 'T19_09', 'T21_11', 'T18_01', 'T21_01', 'T18_05', 'T21_12', 'T18_13',

#> 'T21_10', 'T18_11', 'T21_05', 'T21_13', 'T18_08', 'T18_07', 'T21_09',

#> 'T18_04', 'T18_02', 'T21_02', 'T21_06', 'T18_09', 'T21_04', 'T18_12',

#> 'T21_14', 'T21_03', 'T18_10', 'T30_06', 'T29_04', 'T29_02', 'T30_02',

#> 'T30_03', 'T30_01', 'T29_05', 'T29_03', 'T28_08', 'T28_09', 'T28_02',

#> 'T28_03', 'T28_04', 'T28_01', 'T28_10', 'T28_06', 'T20_05', 'T20_09',

#> 'T20_30', 'T20_08', 'T20_12', 'T20_06', 'T20_07', 'T20_02', 'T20_13',

#> 'T20_03', 'T20_01', 'T20_04', 'T20_11', 'T20_10', 'T32_04', 'T31_03',

#> 'T31_01', 'T31_04', 'T32_03', 'T32_02', 'T32_01', 'T17_02', 'T17_09',

#> 'T17_10', 'T17_04', 'T17_03', 'T17_08', 'T17_06', 'T17_01'

#> Warning: Model split preparation for model No. 10, model name

#> domainlistening__group1.all_group2.countryA: 118 items from 134 items listed

#> the Q matrix not found in data:

#> ℹ 'T12_11', 'T13_15', 'T15_03', 'T13_04', 'T13_07', 'T13_16', 'T13_01',

#> 'T15_02', 'T15_01', 'T15_15', 'T15_16', 'T15_09', 'T13_12', 'T13_13',

#> 'T15_14', 'T13_08', 'T13_06', 'T15_12', 'T13_10', 'T13_17', 'T15_11',

#> 'T13_05', 'T13_09', 'T15_10', 'T15_13', 'T15_17', 'T15_18', 'T13_18',

#> 'T16_08', 'T16_09', 'T16_11', 'T16_13', 'T16_01', 'T16_04', 'T16_10',

#> 'T16_02', 'T16_12', 'T16_06', 'T16_07', 'T19_06', 'T19_08', 'T19_05',

#> 'T19_11', 'T19_01', 'T19_02', 'T19_30', 'T19_03', 'T19_07', 'T19_04',

#> 'T19_09', 'T21_11', 'T18_01', 'T21_01', 'T18_05', 'T21_12', 'T18_13',

#> 'T21_10', 'T18_11', 'T21_05', 'T21_13', 'T18_08', 'T18_07', 'T21_09',

#> 'T18_04', 'T18_02', 'T21_02', 'T21_06', 'T18_09', 'T21_04', 'T18_12',

#> 'T21_14', 'T21_03', 'T18_10', 'T30_06', 'T29_04', 'T29_02', 'T30_02',

#> 'T30_03', 'T30_01', 'T29_05', 'T29_03', 'T28_08', 'T28_09', 'T28_02',

#> 'T28_03', 'T28_04', 'T28_01', 'T28_10', 'T28_06', 'T20_05', 'T20_09',

#> 'T20_30', 'T20_08', 'T20_12', 'T20_06', 'T20_07', 'T20_02', 'T20_13',

#> 'T20_03', 'T20_01', 'T20_04', 'T20_11', 'T20_10', 'T32_04', 'T31_03',

#> 'T31_01', 'T31_04', 'T32_03', 'T32_02', 'T32_01', 'T17_02', 'T17_09',

#> 'T17_10', 'T17_04', 'T17_03', 'T17_08', 'T17_06', 'T17_01'

#> Warning: Model split preparation for model No. 11, model name

#> domainlistening__group1.all_group2.countryB: 118 items from 134 items listed

#> the Q matrix not found in data:

#> ℹ 'T12_11', 'T13_15', 'T15_03', 'T13_04', 'T13_07', 'T13_16', 'T13_01',

#> 'T15_02', 'T15_01', 'T15_15', 'T15_16', 'T15_09', 'T13_12', 'T13_13',

#> 'T15_14', 'T13_08', 'T13_06', 'T15_12', 'T13_10', 'T13_17', 'T15_11',

#> 'T13_05', 'T13_09', 'T15_10', 'T15_13', 'T15_17', 'T15_18', 'T13_18',

#> 'T16_08', 'T16_09', 'T16_11', 'T16_13', 'T16_01', 'T16_04', 'T16_10',

#> 'T16_02', 'T16_12', 'T16_06', 'T16_07', 'T19_06', 'T19_08', 'T19_05',

#> 'T19_11', 'T19_01', 'T19_02', 'T19_30', 'T19_03', 'T19_07', 'T19_04',

#> 'T19_09', 'T21_11', 'T18_01', 'T21_01', 'T18_05', 'T21_12', 'T18_13',

#> 'T21_10', 'T18_11', 'T21_05', 'T21_13', 'T18_08', 'T18_07', 'T21_09',

#> 'T18_04', 'T18_02', 'T21_02', 'T21_06', 'T18_09', 'T21_04', 'T18_12',

#> 'T21_14', 'T21_03', 'T18_10', 'T30_06', 'T29_04', 'T29_02', 'T30_02',

#> 'T30_03', 'T30_01', 'T29_05', 'T29_03', 'T28_08', 'T28_09', 'T28_02',

#> 'T28_03', 'T28_04', 'T28_01', 'T28_10', 'T28_06', 'T20_05', 'T20_09',

#> 'T20_30', 'T20_08', 'T20_12', 'T20_06', 'T20_07', 'T20_02', 'T20_13',

#> 'T20_03', 'T20_01', 'T20_04', 'T20_11', 'T20_10', 'T32_04', 'T31_03',

#> 'T31_01', 'T31_04', 'T32_03', 'T32_02', 'T32_01', 'T17_02', 'T17_09',

#> 'T17_10', 'T17_04', 'T17_03', 'T17_08', 'T17_06', 'T17_01'

#> Warning: Model split preparation for model No. 12, model name

#> domainlistening__group1.all_group2.all: 118 items from 134 items listed the Q

#> matrix not found in data:

#> ℹ 'T12_11', 'T13_15', 'T15_03', 'T13_04', 'T13_07', 'T13_16', 'T13_01',

#> 'T15_02', 'T15_01', 'T15_15', 'T15_16', 'T15_09', 'T13_12', 'T13_13',

#> 'T15_14', 'T13_08', 'T13_06', 'T15_12', 'T13_10', 'T13_17', 'T15_11',

#> 'T13_05', 'T13_09', 'T15_10', 'T15_13', 'T15_17', 'T15_18', 'T13_18',

#> 'T16_08', 'T16_09', 'T16_11', 'T16_13', 'T16_01', 'T16_04', 'T16_10',

#> 'T16_02', 'T16_12', 'T16_06', 'T16_07', 'T19_06', 'T19_08', 'T19_05',

#> 'T19_11', 'T19_01', 'T19_02', 'T19_30', 'T19_03', 'T19_07', 'T19_04',

#> 'T19_09', 'T21_11', 'T18_01', 'T21_01', 'T18_05', 'T21_12', 'T18_13',

#> 'T21_10', 'T18_11', 'T21_05', 'T21_13', 'T18_08', 'T18_07', 'T21_09',

#> 'T18_04', 'T18_02', 'T21_02', 'T21_06', 'T18_09', 'T21_04', 'T18_12',

#> 'T21_14', 'T21_03', 'T18_10', 'T30_06', 'T29_04', 'T29_02', 'T30_02',

#> 'T30_03', 'T30_01', 'T29_05', 'T29_03', 'T28_08', 'T28_09', 'T28_02',

#> 'T28_03', 'T28_04', 'T28_01', 'T28_10', 'T28_06', 'T20_05', 'T20_09',

#> 'T20_30', 'T20_08', 'T20_12', 'T20_06', 'T20_07', 'T20_02', 'T20_13',

#> 'T20_03', 'T20_01', 'T20_04', 'T20_11', 'T20_10', 'T32_04', 'T31_03',

#> 'T31_01', 'T31_04', 'T32_03', 'T32_02', 'T32_01', 'T17_02', 'T17_09',

#> 'T17_10', 'T17_04', 'T17_03', 'T17_08', 'T17_06', 'T17_01'

#> Warning: Model split preparation for model No. 13, model name

#> domainreading__group1.female_group2.countryC: 132 items from 149 items listed

#> the Q matrix not found in data:

#> ℹ 'T07_08', 'T02_02', 'T07_01', 'T02_06', 'T07_05', 'T07_06', 'T02_07',

#> 'T07_02', 'T07_04', 'T02_03', 'T07_09', 'T02_01', 'T02_04', 'T07_03',

#> 'T02_05', 'T07_07', 'T07_10', 'T09_12', 'T01_08', 'T03_07', 'T03_01',

#> 'T03_08', 'T03_05', 'T03_06', 'T03_03', 'T10_08', 'T11_06', 'T11_03',

#> 'T11_01', 'T04_02', 'T04_01', 'T04_06', 'T04_04', 'T11_04', 'T10_01',

#> 'T11_08', 'T10_02', 'T10_09', 'T10_07', 'T11_02', 'T04_05', 'T04_03',

#> 'T11_05', 'T10_06', 'T11_10', 'T11_09', 'T04_07', 'T10_10', 'T04_09',

#> 'T04_08', 'T11_0X', 'T06_05', 'T05_03', 'T05_06', 'T05_05', 'T06_02',

#> 'T06_04', 'T06_03', 'T05_04', 'T05_02', 'T05_01', 'T06_01', 'T08_04',

#> 'T08_06', 'T08_03', 'T08_07', 'T08_02', 'T08_05', 'T08_01', 'T08_09',

#> 'T08_08', 'T03_09', 'T06_1X', 'T26_03', 'T26_05', 'T26_07', 'T26_09',

#> 'T26_06', 'T26_10', 'T26_08', 'T26_02', 'T26_04', 'T27_01', 'T27_05',

#> 'T27_07', 'T27_06', 'T27_04', 'T27_02', 'T27_03', 'T24_05', 'T24_08',

#> 'T24_02', 'T24_10', 'T24_06', 'T24_24', 'T24_25', 'T24_09', 'T24_21',

#> 'T24_23', 'T23_10', 'T23_13', 'T23_09', 'T23_15', 'T23_03', 'T23_04',

#> 'T23_14', 'T23_07', 'T23_08', 'T23_02', 'T23_06', 'T23_05', 'T25_05',

#> 'T25_22', 'T22_03', 'T25_06', 'T22_12', 'T25_09', 'T25_10', 'T25_08',

#> 'T22_11', 'T22_02', 'T25_11', 'T25_07', 'T25_14', 'T25_12', 'T25_04',

#> 'T22_08', 'T25_23', 'T22_10', 'T22_06', 'T25_13', 'T22_01'

#> Warning: Model split preparation for model No. 14, model name

#> domainreading__group1.female_group2.countryA: 132 items from 149 items listed

#> the Q matrix not found in data:

#> ℹ 'T07_08', 'T02_02', 'T07_01', 'T02_06', 'T07_05', 'T07_06', 'T02_07',

#> 'T07_02', 'T07_04', 'T02_03', 'T07_09', 'T02_01', 'T02_04', 'T07_03',

#> 'T02_05', 'T07_07', 'T07_10', 'T09_12', 'T01_08', 'T03_07', 'T03_01',

#> 'T03_08', 'T03_05', 'T03_06', 'T03_03', 'T10_08', 'T11_06', 'T11_03',

#> 'T11_01', 'T04_02', 'T04_01', 'T04_06', 'T04_04', 'T11_04', 'T10_01',

#> 'T11_08', 'T10_02', 'T10_09', 'T10_07', 'T11_02', 'T04_05', 'T04_03',

#> 'T11_05', 'T10_06', 'T11_10', 'T11_09', 'T04_07', 'T10_10', 'T04_09',

#> 'T04_08', 'T11_0X', 'T06_05', 'T05_03', 'T05_06', 'T05_05', 'T06_02',

#> 'T06_04', 'T06_03', 'T05_04', 'T05_02', 'T05_01', 'T06_01', 'T08_04',

#> 'T08_06', 'T08_03', 'T08_07', 'T08_02', 'T08_05', 'T08_01', 'T08_09',

#> 'T08_08', 'T03_09', 'T06_1X', 'T26_03', 'T26_05', 'T26_07', 'T26_09',

#> 'T26_06', 'T26_10', 'T26_08', 'T26_02', 'T26_04', 'T27_01', 'T27_05',

#> 'T27_07', 'T27_06', 'T27_04', 'T27_02', 'T27_03', 'T24_05', 'T24_08',

#> 'T24_02', 'T24_10', 'T24_06', 'T24_24', 'T24_25', 'T24_09', 'T24_21',

#> 'T24_23', 'T23_10', 'T23_13', 'T23_09', 'T23_15', 'T23_03', 'T23_04',

#> 'T23_14', 'T23_07', 'T23_08', 'T23_02', 'T23_06', 'T23_05', 'T25_05',

#> 'T25_22', 'T22_03', 'T25_06', 'T22_12', 'T25_09', 'T25_10', 'T25_08',

#> 'T22_11', 'T22_02', 'T25_11', 'T25_07', 'T25_14', 'T25_12', 'T25_04',

#> 'T22_08', 'T25_23', 'T22_10', 'T22_06', 'T25_13', 'T22_01'

#> Warning: Model split preparation for model No. 15, model name

#> domainreading__group1.female_group2.countryB: 132 items from 149 items listed

#> the Q matrix not found in data:

#> ℹ 'T07_08', 'T02_02', 'T07_01', 'T02_06', 'T07_05', 'T07_06', 'T02_07',

#> 'T07_02', 'T07_04', 'T02_03', 'T07_09', 'T02_01', 'T02_04', 'T07_03',

#> 'T02_05', 'T07_07', 'T07_10', 'T09_12', 'T01_08', 'T03_07', 'T03_01',

#> 'T03_08', 'T03_05', 'T03_06', 'T03_03', 'T10_08', 'T11_06', 'T11_03',

#> 'T11_01', 'T04_02', 'T04_01', 'T04_06', 'T04_04', 'T11_04', 'T10_01',

#> 'T11_08', 'T10_02', 'T10_09', 'T10_07', 'T11_02', 'T04_05', 'T04_03',

#> 'T11_05', 'T10_06', 'T11_10', 'T11_09', 'T04_07', 'T10_10', 'T04_09',

#> 'T04_08', 'T11_0X', 'T06_05', 'T05_03', 'T05_06', 'T05_05', 'T06_02',

#> 'T06_04', 'T06_03', 'T05_04', 'T05_02', 'T05_01', 'T06_01', 'T08_04',

#> 'T08_06', 'T08_03', 'T08_07', 'T08_02', 'T08_05', 'T08_01', 'T08_09',

#> 'T08_08', 'T03_09', 'T06_1X', 'T26_03', 'T26_05', 'T26_07', 'T26_09',

#> 'T26_06', 'T26_10', 'T26_08', 'T26_02', 'T26_04', 'T27_01', 'T27_05',

#> 'T27_07', 'T27_06', 'T27_04', 'T27_02', 'T27_03', 'T24_05', 'T24_08',

#> 'T24_02', 'T24_10', 'T24_06', 'T24_24', 'T24_25', 'T24_09', 'T24_21',

#> 'T24_23', 'T23_10', 'T23_13', 'T23_09', 'T23_15', 'T23_03', 'T23_04',

#> 'T23_14', 'T23_07', 'T23_08', 'T23_02', 'T23_06', 'T23_05', 'T25_05',

#> 'T25_22', 'T22_03', 'T25_06', 'T22_12', 'T25_09', 'T25_10', 'T25_08',

#> 'T22_11', 'T22_02', 'T25_11', 'T25_07', 'T25_14', 'T25_12', 'T25_04',

#> 'T22_08', 'T25_23', 'T22_10', 'T22_06', 'T25_13', 'T22_01'

#> Warning: Model split preparation for model No. 16, model name

#> domainreading__group1.female_group2.all: 132 items from 149 items listed the Q

#> matrix not found in data:

#> ℹ 'T07_08', 'T02_02', 'T07_01', 'T02_06', 'T07_05', 'T07_06', 'T02_07',

#> 'T07_02', 'T07_04', 'T02_03', 'T07_09', 'T02_01', 'T02_04', 'T07_03',

#> 'T02_05', 'T07_07', 'T07_10', 'T09_12', 'T01_08', 'T03_07', 'T03_01',

#> 'T03_08', 'T03_05', 'T03_06', 'T03_03', 'T10_08', 'T11_06', 'T11_03',

#> 'T11_01', 'T04_02', 'T04_01', 'T04_06', 'T04_04', 'T11_04', 'T10_01',

#> 'T11_08', 'T10_02', 'T10_09', 'T10_07', 'T11_02', 'T04_05', 'T04_03',

#> 'T11_05', 'T10_06', 'T11_10', 'T11_09', 'T04_07', 'T10_10', 'T04_09',

#> 'T04_08', 'T11_0X', 'T06_05', 'T05_03', 'T05_06', 'T05_05', 'T06_02',

#> 'T06_04', 'T06_03', 'T05_04', 'T05_02', 'T05_01', 'T06_01', 'T08_04',

#> 'T08_06', 'T08_03', 'T08_07', 'T08_02', 'T08_05', 'T08_01', 'T08_09',

#> 'T08_08', 'T03_09', 'T06_1X', 'T26_03', 'T26_05', 'T26_07', 'T26_09',

#> 'T26_06', 'T26_10', 'T26_08', 'T26_02', 'T26_04', 'T27_01', 'T27_05',

#> 'T27_07', 'T27_06', 'T27_04', 'T27_02', 'T27_03', 'T24_05', 'T24_08',

#> 'T24_02', 'T24_10', 'T24_06', 'T24_24', 'T24_25', 'T24_09', 'T24_21',

#> 'T24_23', 'T23_10', 'T23_13', 'T23_09', 'T23_15', 'T23_03', 'T23_04',

#> 'T23_14', 'T23_07', 'T23_08', 'T23_02', 'T23_06', 'T23_05', 'T25_05',

#> 'T25_22', 'T22_03', 'T25_06', 'T22_12', 'T25_09', 'T25_10', 'T25_08',

#> 'T22_11', 'T22_02', 'T25_11', 'T25_07', 'T25_14', 'T25_12', 'T25_04',

#> 'T22_08', 'T25_23', 'T22_10', 'T22_06', 'T25_13', 'T22_01'

#> Warning: Model split preparation for model No. 17, model name

#> domainreading__group1.male_group2.countryC: 132 items from 149 items listed the

#> Q matrix not found in data:

#> ℹ 'T07_08', 'T02_02', 'T07_01', 'T02_06', 'T07_05', 'T07_06', 'T02_07',

#> 'T07_02', 'T07_04', 'T02_03', 'T07_09', 'T02_01', 'T02_04', 'T07_03',

#> 'T02_05', 'T07_07', 'T07_10', 'T09_12', 'T01_08', 'T03_07', 'T03_01',

#> 'T03_08', 'T03_05', 'T03_06', 'T03_03', 'T10_08', 'T11_06', 'T11_03',

#> 'T11_01', 'T04_02', 'T04_01', 'T04_06', 'T04_04', 'T11_04', 'T10_01',

#> 'T11_08', 'T10_02', 'T10_09', 'T10_07', 'T11_02', 'T04_05', 'T04_03',

#> 'T11_05', 'T10_06', 'T11_10', 'T11_09', 'T04_07', 'T10_10', 'T04_09',

#> 'T04_08', 'T11_0X', 'T06_05', 'T05_03', 'T05_06', 'T05_05', 'T06_02',

#> 'T06_04', 'T06_03', 'T05_04', 'T05_02', 'T05_01', 'T06_01', 'T08_04',

#> 'T08_06', 'T08_03', 'T08_07', 'T08_02', 'T08_05', 'T08_01', 'T08_09',

#> 'T08_08', 'T03_09', 'T06_1X', 'T26_03', 'T26_05', 'T26_07', 'T26_09',

#> 'T26_06', 'T26_10', 'T26_08', 'T26_02', 'T26_04', 'T27_01', 'T27_05',

#> 'T27_07', 'T27_06', 'T27_04', 'T27_02', 'T27_03', 'T24_05', 'T24_08',

#> 'T24_02', 'T24_10', 'T24_06', 'T24_24', 'T24_25', 'T24_09', 'T24_21',

#> 'T24_23', 'T23_10', 'T23_13', 'T23_09', 'T23_15', 'T23_03', 'T23_04',

#> 'T23_14', 'T23_07', 'T23_08', 'T23_02', 'T23_06', 'T23_05', 'T25_05',

#> 'T25_22', 'T22_03', 'T25_06', 'T22_12', 'T25_09', 'T25_10', 'T25_08',

#> 'T22_11', 'T22_02', 'T25_11', 'T25_07', 'T25_14', 'T25_12', 'T25_04',

#> 'T22_08', 'T25_23', 'T22_10', 'T22_06', 'T25_13', 'T22_01'

#> Warning: Model split preparation for model No. 18, model name

#> domainreading__group1.male_group2.countryA: 132 items from 149 items listed the

#> Q matrix not found in data:

#> ℹ 'T07_08', 'T02_02', 'T07_01', 'T02_06', 'T07_05', 'T07_06', 'T02_07',

#> 'T07_02', 'T07_04', 'T02_03', 'T07_09', 'T02_01', 'T02_04', 'T07_03',

#> 'T02_05', 'T07_07', 'T07_10', 'T09_12', 'T01_08', 'T03_07', 'T03_01',

#> 'T03_08', 'T03_05', 'T03_06', 'T03_03', 'T10_08', 'T11_06', 'T11_03',

#> 'T11_01', 'T04_02', 'T04_01', 'T04_06', 'T04_04', 'T11_04', 'T10_01',

#> 'T11_08', 'T10_02', 'T10_09', 'T10_07', 'T11_02', 'T04_05', 'T04_03',

#> 'T11_05', 'T10_06', 'T11_10', 'T11_09', 'T04_07', 'T10_10', 'T04_09',

#> 'T04_08', 'T11_0X', 'T06_05', 'T05_03', 'T05_06', 'T05_05', 'T06_02',

#> 'T06_04', 'T06_03', 'T05_04', 'T05_02', 'T05_01', 'T06_01', 'T08_04',

#> 'T08_06', 'T08_03', 'T08_07', 'T08_02', 'T08_05', 'T08_01', 'T08_09',

#> 'T08_08', 'T03_09', 'T06_1X', 'T26_03', 'T26_05', 'T26_07', 'T26_09',

#> 'T26_06', 'T26_10', 'T26_08', 'T26_02', 'T26_04', 'T27_01', 'T27_05',

#> 'T27_07', 'T27_06', 'T27_04', 'T27_02', 'T27_03', 'T24_05', 'T24_08',

#> 'T24_02', 'T24_10', 'T24_06', 'T24_24', 'T24_25', 'T24_09', 'T24_21',

#> 'T24_23', 'T23_10', 'T23_13', 'T23_09', 'T23_15', 'T23_03', 'T23_04',

#> 'T23_14', 'T23_07', 'T23_08', 'T23_02', 'T23_06', 'T23_05', 'T25_05',

#> 'T25_22', 'T22_03', 'T25_06', 'T22_12', 'T25_09', 'T25_10', 'T25_08',

#> 'T22_11', 'T22_02', 'T25_11', 'T25_07', 'T25_14', 'T25_12', 'T25_04',

#> 'T22_08', 'T25_23', 'T22_10', 'T22_06', 'T25_13', 'T22_01'

#> Warning: Model split preparation for model No. 19, model name

#> domainreading__group1.male_group2.countryB: 132 items from 149 items listed the

#> Q matrix not found in data:

#> ℹ 'T07_08', 'T02_02', 'T07_01', 'T02_06', 'T07_05', 'T07_06', 'T02_07',

#> 'T07_02', 'T07_04', 'T02_03', 'T07_09', 'T02_01', 'T02_04', 'T07_03',

#> 'T02_05', 'T07_07', 'T07_10', 'T09_12', 'T01_08', 'T03_07', 'T03_01',

#> 'T03_08', 'T03_05', 'T03_06', 'T03_03', 'T10_08', 'T11_06', 'T11_03',

#> 'T11_01', 'T04_02', 'T04_01', 'T04_06', 'T04_04', 'T11_04', 'T10_01',

#> 'T11_08', 'T10_02', 'T10_09', 'T10_07', 'T11_02', 'T04_05', 'T04_03',

#> 'T11_05', 'T10_06', 'T11_10', 'T11_09', 'T04_07', 'T10_10', 'T04_09',

#> 'T04_08', 'T11_0X', 'T06_05', 'T05_03', 'T05_06', 'T05_05', 'T06_02',

#> 'T06_04', 'T06_03', 'T05_04', 'T05_02', 'T05_01', 'T06_01', 'T08_04',

#> 'T08_06', 'T08_03', 'T08_07', 'T08_02', 'T08_05', 'T08_01', 'T08_09',

#> 'T08_08', 'T03_09', 'T06_1X', 'T26_03', 'T26_05', 'T26_07', 'T26_09',

#> 'T26_06', 'T26_10', 'T26_08', 'T26_02', 'T26_04', 'T27_01', 'T27_05',

#> 'T27_07', 'T27_06', 'T27_04', 'T27_02', 'T27_03', 'T24_05', 'T24_08',

#> 'T24_02', 'T24_10', 'T24_06', 'T24_24', 'T24_25', 'T24_09', 'T24_21',

#> 'T24_23', 'T23_10', 'T23_13', 'T23_09', 'T23_15', 'T23_03', 'T23_04',

#> 'T23_14', 'T23_07', 'T23_08', 'T23_02', 'T23_06', 'T23_05', 'T25_05',

#> 'T25_22', 'T22_03', 'T25_06', 'T22_12', 'T25_09', 'T25_10', 'T25_08',

#> 'T22_11', 'T22_02', 'T25_11', 'T25_07', 'T25_14', 'T25_12', 'T25_04',

#> 'T22_08', 'T25_23', 'T22_10', 'T22_06', 'T25_13', 'T22_01'

#> Warning: Model split preparation for model No. 20, model name

#> domainreading__group1.male_group2.all: 132 items from 149 items listed the Q

#> matrix not found in data:

#> ℹ 'T07_08', 'T02_02', 'T07_01', 'T02_06', 'T07_05', 'T07_06', 'T02_07',

#> 'T07_02', 'T07_04', 'T02_03', 'T07_09', 'T02_01', 'T02_04', 'T07_03',

#> 'T02_05', 'T07_07', 'T07_10', 'T09_12', 'T01_08', 'T03_07', 'T03_01',

#> 'T03_08', 'T03_05', 'T03_06', 'T03_03', 'T10_08', 'T11_06', 'T11_03',

#> 'T11_01', 'T04_02', 'T04_01', 'T04_06', 'T04_04', 'T11_04', 'T10_01',

#> 'T11_08', 'T10_02', 'T10_09', 'T10_07', 'T11_02', 'T04_05', 'T04_03',

#> 'T11_05', 'T10_06', 'T11_10', 'T11_09', 'T04_07', 'T10_10', 'T04_09',

#> 'T04_08', 'T11_0X', 'T06_05', 'T05_03', 'T05_06', 'T05_05', 'T06_02',

#> 'T06_04', 'T06_03', 'T05_04', 'T05_02', 'T05_01', 'T06_01', 'T08_04',

#> 'T08_06', 'T08_03', 'T08_07', 'T08_02', 'T08_05', 'T08_01', 'T08_09',

#> 'T08_08', 'T03_09', 'T06_1X', 'T26_03', 'T26_05', 'T26_07', 'T26_09',

#> 'T26_06', 'T26_10', 'T26_08', 'T26_02', 'T26_04', 'T27_01', 'T27_05',

#> 'T27_07', 'T27_06', 'T27_04', 'T27_02', 'T27_03', 'T24_05', 'T24_08',

#> 'T24_02', 'T24_10', 'T24_06', 'T24_24', 'T24_25', 'T24_09', 'T24_21',

#> 'T24_23', 'T23_10', 'T23_13', 'T23_09', 'T23_15', 'T23_03', 'T23_04',

#> 'T23_14', 'T23_07', 'T23_08', 'T23_02', 'T23_06', 'T23_05', 'T25_05',

#> 'T25_22', 'T22_03', 'T25_06', 'T22_12', 'T25_09', 'T25_10', 'T25_08',

#> 'T22_11', 'T22_02', 'T25_11', 'T25_07', 'T25_14', 'T25_12', 'T25_04',

#> 'T22_08', 'T25_23', 'T22_10', 'T22_06', 'T25_13', 'T22_01'

#> Warning: Model split preparation for model No. 21, model name

#> domainreading__group1.all_group2.countryC: 132 items from 149 items listed the

#> Q matrix not found in data:

#> ℹ 'T07_08', 'T02_02', 'T07_01', 'T02_06', 'T07_05', 'T07_06', 'T02_07',

#> 'T07_02', 'T07_04', 'T02_03', 'T07_09', 'T02_01', 'T02_04', 'T07_03',

#> 'T02_05', 'T07_07', 'T07_10', 'T09_12', 'T01_08', 'T03_07', 'T03_01',

#> 'T03_08', 'T03_05', 'T03_06', 'T03_03', 'T10_08', 'T11_06', 'T11_03',

#> 'T11_01', 'T04_02', 'T04_01', 'T04_06', 'T04_04', 'T11_04', 'T10_01',

#> 'T11_08', 'T10_02', 'T10_09', 'T10_07', 'T11_02', 'T04_05', 'T04_03',

#> 'T11_05', 'T10_06', 'T11_10', 'T11_09', 'T04_07', 'T10_10', 'T04_09',

#> 'T04_08', 'T11_0X', 'T06_05', 'T05_03', 'T05_06', 'T05_05', 'T06_02',

#> 'T06_04', 'T06_03', 'T05_04', 'T05_02', 'T05_01', 'T06_01', 'T08_04',

#> 'T08_06', 'T08_03', 'T08_07', 'T08_02', 'T08_05', 'T08_01', 'T08_09',

#> 'T08_08', 'T03_09', 'T06_1X', 'T26_03', 'T26_05', 'T26_07', 'T26_09',

#> 'T26_06', 'T26_10', 'T26_08', 'T26_02', 'T26_04', 'T27_01', 'T27_05',

#> 'T27_07', 'T27_06', 'T27_04', 'T27_02', 'T27_03', 'T24_05', 'T24_08',

#> 'T24_02', 'T24_10', 'T24_06', 'T24_24', 'T24_25', 'T24_09', 'T24_21',

#> 'T24_23', 'T23_10', 'T23_13', 'T23_09', 'T23_15', 'T23_03', 'T23_04',

#> 'T23_14', 'T23_07', 'T23_08', 'T23_02', 'T23_06', 'T23_05', 'T25_05',

#> 'T25_22', 'T22_03', 'T25_06', 'T22_12', 'T25_09', 'T25_10', 'T25_08',

#> 'T22_11', 'T22_02', 'T25_11', 'T25_07', 'T25_14', 'T25_12', 'T25_04',

#> 'T22_08', 'T25_23', 'T22_10', 'T22_06', 'T25_13', 'T22_01'

#> Warning: Model split preparation for model No. 22, model name

#> domainreading__group1.all_group2.countryA: 132 items from 149 items listed the

#> Q matrix not found in data:

#> ℹ 'T07_08', 'T02_02', 'T07_01', 'T02_06', 'T07_05', 'T07_06', 'T02_07',

#> 'T07_02', 'T07_04', 'T02_03', 'T07_09', 'T02_01', 'T02_04', 'T07_03',

#> 'T02_05', 'T07_07', 'T07_10', 'T09_12', 'T01_08', 'T03_07', 'T03_01',

#> 'T03_08', 'T03_05', 'T03_06', 'T03_03', 'T10_08', 'T11_06', 'T11_03',

#> 'T11_01', 'T04_02', 'T04_01', 'T04_06', 'T04_04', 'T11_04', 'T10_01',

#> 'T11_08', 'T10_02', 'T10_09', 'T10_07', 'T11_02', 'T04_05', 'T04_03',

#> 'T11_05', 'T10_06', 'T11_10', 'T11_09', 'T04_07', 'T10_10', 'T04_09',

#> 'T04_08', 'T11_0X', 'T06_05', 'T05_03', 'T05_06', 'T05_05', 'T06_02',

#> 'T06_04', 'T06_03', 'T05_04', 'T05_02', 'T05_01', 'T06_01', 'T08_04',

#> 'T08_06', 'T08_03', 'T08_07', 'T08_02', 'T08_05', 'T08_01', 'T08_09',

#> 'T08_08', 'T03_09', 'T06_1X', 'T26_03', 'T26_05', 'T26_07', 'T26_09',

#> 'T26_06', 'T26_10', 'T26_08', 'T26_02', 'T26_04', 'T27_01', 'T27_05',

#> 'T27_07', 'T27_06', 'T27_04', 'T27_02', 'T27_03', 'T24_05', 'T24_08',

#> 'T24_02', 'T24_10', 'T24_06', 'T24_24', 'T24_25', 'T24_09', 'T24_21',

#> 'T24_23', 'T23_10', 'T23_13', 'T23_09', 'T23_15', 'T23_03', 'T23_04',

#> 'T23_14', 'T23_07', 'T23_08', 'T23_02', 'T23_06', 'T23_05', 'T25_05',

#> 'T25_22', 'T22_03', 'T25_06', 'T22_12', 'T25_09', 'T25_10', 'T25_08',

#> 'T22_11', 'T22_02', 'T25_11', 'T25_07', 'T25_14', 'T25_12', 'T25_04',

#> 'T22_08', 'T25_23', 'T22_10', 'T22_06', 'T25_13', 'T22_01'

#> Warning: Model split preparation for model No. 23, model name

#> domainreading__group1.all_group2.countryB: 132 items from 149 items listed the

#> Q matrix not found in data:

#> ℹ 'T07_08', 'T02_02', 'T07_01', 'T02_06', 'T07_05', 'T07_06', 'T02_07',

#> 'T07_02', 'T07_04', 'T02_03', 'T07_09', 'T02_01', 'T02_04', 'T07_03',

#> 'T02_05', 'T07_07', 'T07_10', 'T09_12', 'T01_08', 'T03_07', 'T03_01',

#> 'T03_08', 'T03_05', 'T03_06', 'T03_03', 'T10_08', 'T11_06', 'T11_03',

#> 'T11_01', 'T04_02', 'T04_01', 'T04_06', 'T04_04', 'T11_04', 'T10_01',

#> 'T11_08', 'T10_02', 'T10_09', 'T10_07', 'T11_02', 'T04_05', 'T04_03',

#> 'T11_05', 'T10_06', 'T11_10', 'T11_09', 'T04_07', 'T10_10', 'T04_09',

#> 'T04_08', 'T11_0X', 'T06_05', 'T05_03', 'T05_06', 'T05_05', 'T06_02',

#> 'T06_04', 'T06_03', 'T05_04', 'T05_02', 'T05_01', 'T06_01', 'T08_04',

#> 'T08_06', 'T08_03', 'T08_07', 'T08_02', 'T08_05', 'T08_01', 'T08_09',

#> 'T08_08', 'T03_09', 'T06_1X', 'T26_03', 'T26_05', 'T26_07', 'T26_09',

#> 'T26_06', 'T26_10', 'T26_08', 'T26_02', 'T26_04', 'T27_01', 'T27_05',

#> 'T27_07', 'T27_06', 'T27_04', 'T27_02', 'T27_03', 'T24_05', 'T24_08',

#> 'T24_02', 'T24_10', 'T24_06', 'T24_24', 'T24_25', 'T24_09', 'T24_21',

#> 'T24_23', 'T23_10', 'T23_13', 'T23_09', 'T23_15', 'T23_03', 'T23_04',

#> 'T23_14', 'T23_07', 'T23_08', 'T23_02', 'T23_06', 'T23_05', 'T25_05',

#> 'T25_22', 'T22_03', 'T25_06', 'T22_12', 'T25_09', 'T25_10', 'T25_08',

#> 'T22_11', 'T22_02', 'T25_11', 'T25_07', 'T25_14', 'T25_12', 'T25_04',

#> 'T22_08', 'T25_23', 'T22_10', 'T22_06', 'T25_13', 'T22_01'

#> Warning: Model split preparation for model No. 24, model name

#> domainreading__group1.all_group2.all: 132 items from 149 items listed the Q

#> matrix not found in data:

#> ℹ 'T07_08', 'T02_02', 'T07_01', 'T02_06', 'T07_05', 'T07_06', 'T02_07',

#> 'T07_02', 'T07_04', 'T02_03', 'T07_09', 'T02_01', 'T02_04', 'T07_03',

#> 'T02_05', 'T07_07', 'T07_10', 'T09_12', 'T01_08', 'T03_07', 'T03_01',

#> 'T03_08', 'T03_05', 'T03_06', 'T03_03', 'T10_08', 'T11_06', 'T11_03',

#> 'T11_01', 'T04_02', 'T04_01', 'T04_06', 'T04_04', 'T11_04', 'T10_01',

#> 'T11_08', 'T10_02', 'T10_09', 'T10_07', 'T11_02', 'T04_05', 'T04_03',

#> 'T11_05', 'T10_06', 'T11_10', 'T11_09', 'T04_07', 'T10_10', 'T04_09',

#> 'T04_08', 'T11_0X', 'T06_05', 'T05_03', 'T05_06', 'T05_05', 'T06_02',

#> 'T06_04', 'T06_03', 'T05_04', 'T05_02', 'T05_01', 'T06_01', 'T08_04',

#> 'T08_06', 'T08_03', 'T08_07', 'T08_02', 'T08_05', 'T08_01', 'T08_09',

#> 'T08_08', 'T03_09', 'T06_1X', 'T26_03', 'T26_05', 'T26_07', 'T26_09',

#> 'T26_06', 'T26_10', 'T26_08', 'T26_02', 'T26_04', 'T27_01', 'T27_05',

#> 'T27_07', 'T27_06', 'T27_04', 'T27_02', 'T27_03', 'T24_05', 'T24_08',

#> 'T24_02', 'T24_10', 'T24_06', 'T24_24', 'T24_25', 'T24_09', 'T24_21',

#> 'T24_23', 'T23_10', 'T23_13', 'T23_09', 'T23_15', 'T23_03', 'T23_04',

#> 'T23_14', 'T23_07', 'T23_08', 'T23_02', 'T23_06', 'T23_05', 'T25_05',

#> 'T25_22', 'T22_03', 'T25_06', 'T22_12', 'T25_09', 'T25_10', 'T25_08',

#> 'T22_11', 'T22_02', 'T25_11', 'T25_07', 'T25_14', 'T25_12', 'T25_04',

#> 'T22_08', 'T25_23', 'T22_10', 'T22_06', 'T25_13', 'T22_01'

#>

#>

#> ========================================================================

#> Model No. 1

#> Model name: domainlistening__group1.female_group2.countryC

#> Number of items: 16

#> Number of persons: 20

#> Number of dimensions: 1

#> ========================================================================

#>

#> Following 118 item(s) missed in data frame will be removed from Q matrix:

#> T12_11, T13_15, T15_03, T13_04, T13_07, T13_16, T13_01, T15_02, T15_01, T15_15, T15_16, T15_09, T13_12, T13_13, T15_14, T13_08, T13_06, T15_12, T13_10, T13_17, T15_11, T13_05, T13_09, T15_10, T15_13, T15_17, T15_18, T13_18, T16_08, T16_09, T16_11, T16_13, T16_01, T16_04, T16_10, T16_02, T16_12, T16_06, T16_07, T19_06, T19_08, T19_05, T19_11, T19_01, T19_02, T19_30, T19_03, T19_07, T19_04, T19_09, T21_11, T18_01, T21_01, T18_05, T21_12, T18_13, T21_10, T18_11, T21_05, T21_13, T18_08, T18_07, T21_09, T18_04, T18_02, T21_02, T21_06, T18_09, T21_04, T18_12, T21_14, T21_03, T18_10, T30_06, T29_04, T29_02, T30_02, T30_03, T30_01, T29_05, T29_03, T28_08, T28_09, T28_02, T28_03, T28_04, T28_01, T28_10, T28_06, T20_05, T20_09, T20_30, T20_08, T20_12, T20_06, T20_07, T20_02, T20_13, T20_03, T20_01, T20_04, T20_11, T20_10, T32_04, T31_03, T31_01, T31_04, T32_03, T32_02, T32_01, T17_02, T17_09, T17_10, T17_04, T17_03, T17_08, T17_06, T17_01

#> Warning: 16 testitem(s) with less than 50 valid responses: These items are nevertheless kept in the data set: 'T12_06', 'T12_07', 'T14_03', 'T14_06', 'T12_01', 'T12_03', 'T12_08', 'T12_10', 'T14_02', 'T14_05', 'T12_02', 'T12_04', 'T14_04', 'T12_05', 'T14_01', 'T12_09'

#> Warning: 2 testitem(s) are constants. Remove these items from the data set:

#> Item 'T14_04', only value '0' occurs: 20 valid responses.

#> Item 'T12_05', only value '1' occurs: 20 valid responses.

#> Remove 2 test item(s) overall.

#> Dataset is completely linked.

#> 'gauss' has been chosen for estimation method. Number of nodes was not explicitly specified. Set nodes to 20.

#> Following 14 items with less than 50 item responses:

#>                   item.name cases Missing valid item.p

#> factor.T12_06.1.1    T12_06    20       0    20   0.50

#> factor.T12_07.1.1    T12_07    20       0    20   0.75

#> factor.T14_03.1.1    T14_03    20       0    20   0.10

#> factor.T14_06.1.1    T14_06    20       0    20   0.35

#> factor.T12_01.1.1    T12_01    20       0    20   0.90

#> factor.T12_03.1.1    T12_03    20       0    20   0.90

#> factor.T12_08.1.1    T12_08    20       0    20   0.80

#> factor.T12_10.1.1    T12_10    20       0    20   0.70

#> factor.T14_02.1.1    T14_02    20       0    20   0.15

#> factor.T14_05.1.1    T14_05    20       0    20   0.30

#> factor.T12_02.1.1    T12_02    20       0    20   0.50

#> factor.T12_04.1.1    T12_04    20       0    20   0.70

#> factor.T14_01.1.1    T14_01    20       0    20   0.15

#> factor.T12_09.1.1    T12_09    20       0    20   0.95

#> Q matrix specifies 1 dimension(s).

#>

#>

#> ========================================================================

#> Model No. 2

#> Model name: domainlistening__group1.female_group2.countryA

#> Number of items: 16

#> Number of persons: 42

#> Number of dimensions: 1

#> ========================================================================

#>

#> Following 118 item(s) missed in data frame will be removed from Q matrix:

#> T12_11, T13_15, T15_03, T13_04, T13_07, T13_16, T13_01, T15_02, T15_01, T15_15, T15_16, T15_09, T13_12, T13_13, T15_14, T13_08, T13_06, T15_12, T13_10, T13_17, T15_11, T13_05, T13_09, T15_10, T15_13, T15_17, T15_18, T13_18, T16_08, T16_09, T16_11, T16_13, T16_01, T16_04, T16_10, T16_02, T16_12, T16_06, T16_07, T19_06, T19_08, T19_05, T19_11, T19_01, T19_02, T19_30, T19_03, T19_07, T19_04, T19_09, T21_11, T18_01, T21_01, T18_05, T21_12, T18_13, T21_10, T18_11, T21_05, T21_13, T18_08, T18_07, T21_09, T18_04, T18_02, T21_02, T21_06, T18_09, T21_04, T18_12, T21_14, T21_03, T18_10, T30_06, T29_04, T29_02, T30_02, T30_03, T30_01, T29_05, T29_03, T28_08, T28_09, T28_02, T28_03, T28_04, T28_01, T28_10, T28_06, T20_05, T20_09, T20_30, T20_08, T20_12, T20_06, T20_07, T20_02, T20_13, T20_03, T20_01, T20_04, T20_11, T20_10, T32_04, T31_03, T31_01, T31_04, T32_03, T32_02, T32_01, T17_02, T17_09, T17_10, T17_04, T17_03, T17_08, T17_06, T17_01

#> Warning: 16 testitem(s) with less than 50 valid responses: These items are nevertheless kept in the data set: 'T12_06', 'T12_07', 'T14_03', 'T14_06', 'T12_01', 'T12_03', 'T12_08', 'T12_10', 'T14_02', 'T14_05', 'T12_02', 'T12_04', 'T14_04', 'T12_05', 'T14_01', 'T12_09'

#> Warning: 1 testitem(s) are constants. Remove these items from the data set:

#> Item 'T12_05', only value '1' occurs: 42 valid responses.

#> Remove 1 test item(s) overall.

#> 1 subject(s) do not solve any item:

#> P02289 (15 false), P02289 (15 false) ...

#> Dataset is completely linked.

#> 'gauss' has been chosen for estimation method. Number of nodes was not explicitly specified. Set nodes to 20.

#> Following 15 items with less than 50 item responses:

#>                   item.name cases Missing valid item.p

#> factor.T12_06.1.1    T12_06    42       0    42 0.4762

#> factor.T12_07.1.1    T12_07    42       0    42 0.7381

#> factor.T14_03.1.1    T14_03    42       0    42 0.1190

#> factor.T14_06.1.1    T14_06    42       0    42 0.3810

#> factor.T12_01.1.1    T12_01    42       0    42 0.9762

#> factor.T12_03.1.1    T12_03    42       0    42 0.9048

#> factor.T12_08.1.1    T12_08    42       0    42 0.6190

#> factor.T12_10.1.1    T12_10    42       0    42 0.7857

#> factor.T14_02.1.1    T14_02    42       0    42 0.1667

#> factor.T14_05.1.1    T14_05    42       0    42 0.3095

#> factor.T12_02.1.1    T12_02    42       0    42 0.5714

#> factor.T12_04.1.1    T12_04    42       0    42 0.7619

#> factor.T14_04.1.1    T14_04    42       0    42 0.0476

#> factor.T14_01.1.1    T14_01    42       0    42 0.0714

#> factor.T12_09.1.1    T12_09    42       0    42 0.9048

#> Q matrix specifies 1 dimension(s).

#>

#>

#> ========================================================================

#> Model No. 3

#> Model name: domainlistening__group1.female_group2.countryB

#> Number of items: 16

#> Number of persons: 27

#> Number of dimensions: 1

#> ========================================================================

#>

#> Following 118 item(s) missed in data frame will be removed from Q matrix:

#> T12_11, T13_15, T15_03, T13_04, T13_07, T13_16, T13_01, T15_02, T15_01, T15_15, T15_16, T15_09, T13_12, T13_13, T15_14, T13_08, T13_06, T15_12, T13_10, T13_17, T15_11, T13_05, T13_09, T15_10, T15_13, T15_17, T15_18, T13_18, T16_08, T16_09, T16_11, T16_13, T16_01, T16_04, T16_10, T16_02, T16_12, T16_06, T16_07, T19_06, T19_08, T19_05, T19_11, T19_01, T19_02, T19_30, T19_03, T19_07, T19_04, T19_09, T21_11, T18_01, T21_01, T18_05, T21_12, T18_13, T21_10, T18_11, T21_05, T21_13, T18_08, T18_07, T21_09, T18_04, T18_02, T21_02, T21_06, T18_09, T21_04, T18_12, T21_14, T21_03, T18_10, T30_06, T29_04, T29_02, T30_02, T30_03, T30_01, T29_05, T29_03, T28_08, T28_09, T28_02, T28_03, T28_04, T28_01, T28_10, T28_06, T20_05, T20_09, T20_30, T20_08, T20_12, T20_06, T20_07, T20_02, T20_13, T20_03, T20_01, T20_04, T20_11, T20_10, T32_04, T31_03, T31_01, T31_04, T32_03, T32_02, T32_01, T17_02, T17_09, T17_10, T17_04, T17_03, T17_08, T17_06, T17_01

#> Warning: 16 testitem(s) with less than 50 valid responses: These items are nevertheless kept in the data set: 'T12_06', 'T12_07', 'T14_03', 'T14_06', 'T12_01', 'T12_03', 'T12_08', 'T12_10', 'T14_02', 'T14_05', 'T12_02', 'T12_04', 'T14_04', 'T12_05', 'T14_01', 'T12_09'

#> Dataset is completely linked.

#> 'gauss' has been chosen for estimation method. Number of nodes was not explicitly specified. Set nodes to 20.

#> Following 16 items with less than 50 item responses:

#> item.name cases Missing valid item.p

#> factor.T12_06.1.1 T12_06 27 0 27 0.222

#> factor.T12_07.1.1 T12_07 27 0 27 0.852